Bulkhead pattern

Need(s)

Our distributed system includes multiple services, and each service has one or more consumers. Excessive load or failure in a service will impact all consumers of that service. Moreover, a consumer may send requests to multiple services simultaneously, using resources for each request. When the consumer sends a request to a service that is misconfigured or not responding, the resources used by that request may not be freed in a timely manner. As requests to the service continue, those resources may be exhausted. For example, the consumer's connection pool may be exhausted. At that point, the consumer's requests to other services are impacted as well: eventually the consumer can no longer send requests to any service, not just the original unresponsive one.

The same issue of resource exhaustion affects services with multiple consumers. A large number of requests originating from one consumer may exhaust the resources available in the service. Other consumers are then no longer able to use the service, which causes a cascading failure.

Bulkhead pattern definition

To explain this pattern, we can take the example of the sectioned partitions (bulkheads) of a ship's hull. If the hull of a ship is compromised, only the damaged section fills with water, which prevents the ship from sinking. The same partitioning approach is used in microservice architecture to confine failure to a small portion of the system. The service boundary serves as a bulkhead: breaking functionality out into separate services isolates the effect of a failure within the service itself. But this isolation is not enough, because our services consume each other. Each service has one or more consumers, and excessive load or failure in a service will still impact all of them.

Some implementations of the Bulkhead pattern resolve the problem by limiting the number of concurrent calls to a component. This way, the number of resources (typically threads) waiting for a reply from the component is limited.
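As an illustration, the limit on concurrent calls can be sketched with a counting semaphore. This is a minimal sketch, not a reference implementation: the names `Bulkhead`, `BulkheadFullError` and `max_concurrent_calls` are hypothetical, and rejecting a call immediately when no slot is free (rather than queueing it) is an assumption made here for simplicity.

```python
import threading


class BulkheadFullError(Exception):
    """Raised when the bulkhead has no free slot for another call (hypothetical name)."""


class Bulkhead:
    """Limits the number of concurrent calls to one component."""

    def __init__(self, max_concurrent_calls):
        # One semaphore permit per allowed concurrent call.
        self._slots = threading.BoundedSemaphore(max_concurrent_calls)

    def call(self, func, *args, **kwargs):
        # Non-blocking acquire: reject immediately instead of queueing,
        # so the caller's thread is never tied up waiting for a slot.
        if not self._slots.acquire(blocking=False):
            raise BulkheadFullError("too many concurrent calls")
        try:
            return func(*args, **kwargs)
        finally:
            # Always free the slot, even if the call raised.
            self._slots.release()
```

A caller would create one `Bulkhead` per protected component and route every call to that component through `call`. Waiting for a slot with a timeout instead of rejecting at once is an equally valid design; the essential point is that the number of threads blocked on the component stays bounded.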

Assume we have a request-based, multithreaded application (for example, a typical web application) that uses three different components: A, B, and C. If requests to component C start to hang, eventually all request-handling threads will hang waiting for an answer from C. This would make the application entirely unresponsive. If requests to C are merely handled slowly, we have a similar problem when the load is high enough. Limiting the number of concurrent calls to a component saves the application in this case. Assume we have 30 request-handling threads and a limit of 10 concurrent calls to C. Then at most 10 request-handling threads can hang when calling C; the other 20 threads can still handle requests and use components A and B.
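The arithmetic of this scenario can be checked with a small simulation. The sketch below assumes a pool of 30 worker threads and a semaphore with 10 permits guarding C. The function names (`simulate`, `call_c`, `call_a`) and the choice to reject calls when no permit is free are illustrative assumptions, and the hanging component is simulated with an event that stays unset.

```python
import itertools
import threading
from concurrent.futures import ThreadPoolExecutor, as_completed

POOL_SIZE = 30   # request-handling threads, as in the text
LIMIT_C = 10     # maximum concurrent calls to component C


def simulate():
    c_slots = threading.BoundedSemaphore(LIMIT_C)
    c_recovers = threading.Event()  # stays unset while C is "hanging"

    def call_c():
        # Reject instead of waiting when all of C's slots are taken.
        if not c_slots.acquire(blocking=False):
            return "C rejected"
        try:
            c_recovers.wait()  # simulates a call that does not return
            return "C answered"
        finally:
            c_slots.release()

    def call_a():
        return "A answered"

    with ThreadPoolExecutor(max_workers=POOL_SIZE) as pool:
        c_futures = [pool.submit(call_c) for _ in range(POOL_SIZE)]
        # Only calls that found no free slot can finish while C hangs;
        # wait for all 20 of them.
        first_done = itertools.islice(as_completed(c_futures), POOL_SIZE - LIMIT_C)
        rejected = [f.result() for f in first_done]
        # The threads not stuck in C can still serve component A.
        a_result = pool.submit(call_a).result(timeout=5)
        hanging = sum(not f.done() for f in c_futures)
        c_recovers.set()  # let C "recover" so the pool can shut down
    return rejected, a_result, hanging


rejected, a_result, hanging = simulate()
print(len(rejected), "calls rejected,", hanging, "calls hanging,", a_result)
```

Without the semaphore, all 30 threads would block inside `call_c`, and the request to A would never be served while C hangs.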

Integration with other patterns

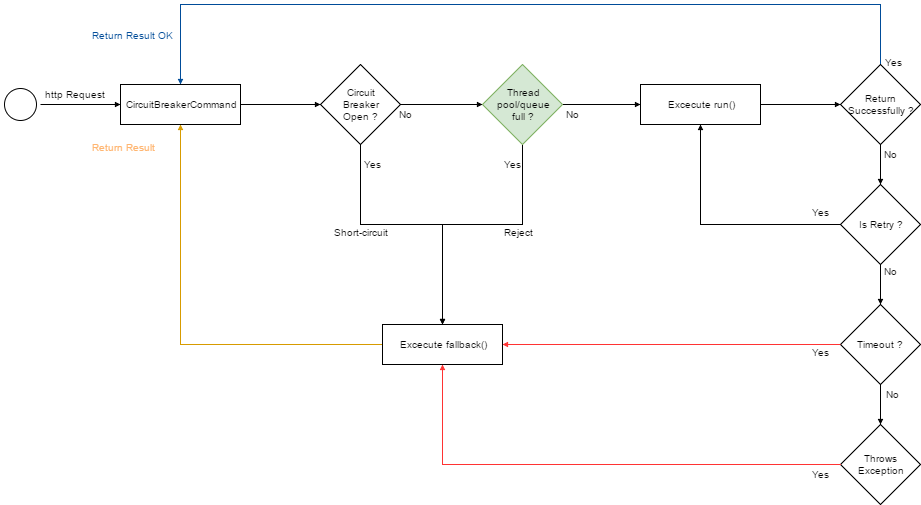

Using the Bulkhead pattern with the Circuit breaker pattern [1], the Retry pattern [2], and the Fallback pattern [3], we will have this workflow:

1. “Circuit breaker pattern”, This content

2. “Retry pattern”, This content

3. “Fallback pattern”, This content